Exploring Network Architecture: Layers and TCP Reliability Explained

In the digital age, understanding the fundamentals of networking is crucial, whether you're a developer, an IT professional, or simply a tech enthusiast. Networking forms the backbone of modern communication systems, enabling the transfer of data across the globe. This article will delve into the basics of networking, explore the different layers of the network stack, and provide a deep dive into how TCP (Transmission Control Protocol) ensures reliable communication over the internet.

The OSI Model: An Overview

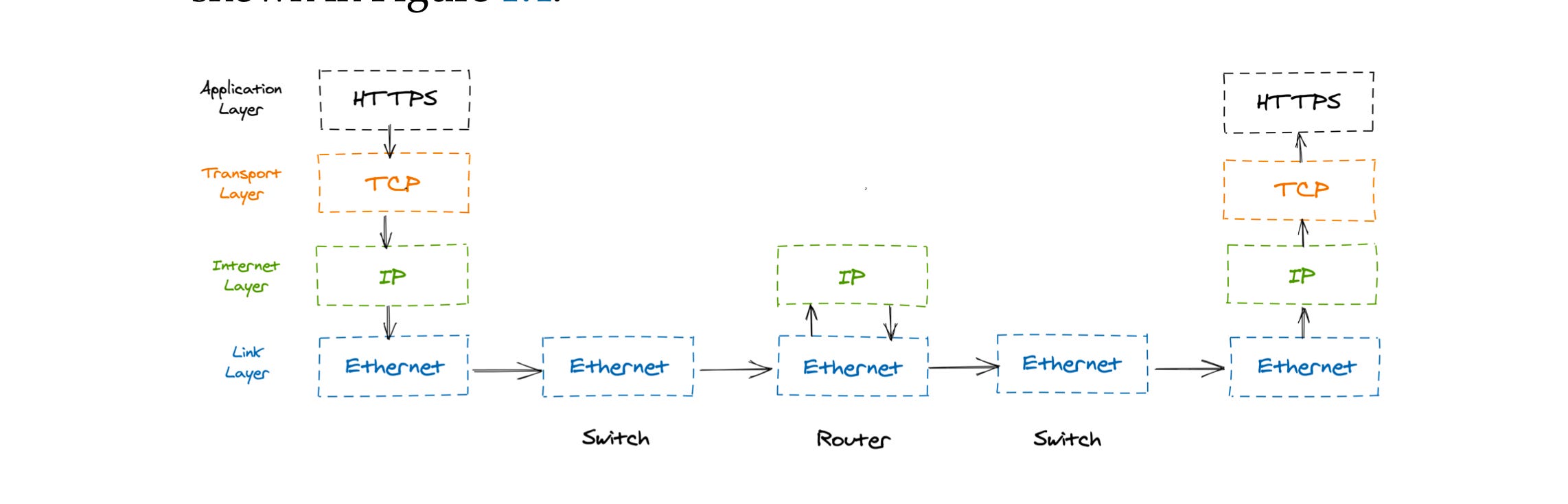

The Open Systems Interconnection (OSI) model is a conceptual framework that describes networking as seven layers. Each layer has a specific function and communicates only with the layers directly above and below it. For the purposes of this article, however, we will use the simpler four-layer Internet (TCP/IP) model, which reflects how the internet's protocols are actually organized: Application, Transport, Network, and Link.

The Network Layers

1. Application Layer

The Application Layer is the topmost layer of the network stack and provides network services directly to applications and, through them, to end users. This layer includes the protocols that software applications use to communicate over the network.

HTTP (Hypertext Transfer Protocol): HTTP is the foundation of data communication on the World Wide Web. It allows for the fetching of resources, such as HTML documents, enabling the loading of web pages in browsers.

DNS (Domain Name System): DNS translates human-readable domain names into IP addresses that computers use to identify each other on the network. Without DNS, navigating the internet would be much more cumbersome.
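To make these two protocols a little more concrete, here is a minimal sketch using only Python's standard library. `resolve` asks the operating system's resolver (which typically consults the local hosts file and then DNS) for a name's addresses, and `build_get_request` shows that an HTTP/1.1 request is just structured text. Both function names are illustrative, and the example resolves `localhost` so that it does not depend on outside DNS servers.

```python
import socket
from typing import List

def resolve(hostname: str, port: int = 80) -> List[str]:
    """Ask the OS resolver for the IP addresses of hostname."""
    infos = socket.getaddrinfo(hostname, port, proto=socket.IPPROTO_TCP)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP
    return sorted({info[4][0] for info in infos})

def build_get_request(host: str, path: str = "/") -> bytes:
    """An HTTP/1.1 request is plain text: request line, headers, blank line."""
    lines = [
        f"GET {path} HTTP/1.1",
        f"Host: {host}",
        "Connection: close",
        "",  # empty header line ends the headers...
        "",  # ...producing the final CRLF CRLF
    ]
    return "\r\n".join(lines).encode("ascii")

print(resolve("localhost"))  # typically includes '127.0.0.1' and/or '::1'
print(build_get_request("example.com"))
```

In a real browser, the resolved address is then used to open a TCP connection, and the request bytes are written to it.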

2. Transport Layer

The Transport Layer provides end-to-end communication between applications running on different hosts. Depending on the protocol, it can also handle reliable delivery, flow control, and error detection and recovery.

TCP (Transmission Control Protocol): TCP is a connection-oriented protocol that ensures data is delivered reliably and in order. It establishes a connection between the sender and receiver before data is transferred.
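Applications rarely see the handshake or individual segments; the operating system's TCP implementation handles them behind the socket API. The sketch below assumes a machine where a loopback connection can be opened. It runs a one-shot echo server on `127.0.0.1` and connects to it: `socket.create_connection` returns only after the connection has been established, and the bytes come back intact and in order. The helper names `echo_once` and `tcp_round_trip` are hypothetical.

```python
import socket
import threading

def echo_once(server_sock: socket.socket) -> None:
    """Accept one connection, read until the client stops sending, echo it back."""
    conn, _addr = server_sock.accept()  # returns once a handshake has completed
    with conn:
        chunks = []
        while True:
            chunk = conn.recv(4096)
            if not chunk:  # empty read: the client closed its sending side
                break
            chunks.append(chunk)
        conn.sendall(b"".join(chunks))

def tcp_round_trip(message: bytes) -> bytes:
    # AF_INET + SOCK_STREAM selects TCP over IPv4
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as server:
        server.bind(("127.0.0.1", 0))  # port 0: let the OS choose a free port
        server.listen(1)
        port = server.getsockname()[1]
        worker = threading.Thread(target=echo_once, args=(server,))
        worker.start()
        # create_connection() returns after the three-way handshake succeeds
        with socket.create_connection(("127.0.0.1", port)) as client:
            client.sendall(message)
            client.shutdown(socket.SHUT_WR)  # tell the server no more data is coming
            received = []
            while True:
                chunk = client.recv(4096)
                if not chunk:  # server closed the connection after echoing
                    break
                received.append(chunk)
        worker.join()
    return b"".join(received)

print(tcp_round_trip(b"hello over TCP"))  # b'hello over TCP'
```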

3. Network Layer

The Network Layer is responsible for data routing, forwarding, and addressing. It determines how data packets are sent from one device to another across multiple networks.

IP (Internet Protocol): IP is responsible for addressing and routing packets of data so that they can travel across networks and arrive at the correct destination. It defines IP addresses, which are unique identifiers for devices on a network.
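At its core, a routing decision means matching the destination address against a table of network prefixes and choosing the most specific (longest) match. The sketch below uses Python's standard `ipaddress` module with a made-up forwarding table; the route names and the `next_hop` helper are hypothetical, and real routers perform this lookup in specialized data structures or hardware.

```python
import ipaddress

# Hypothetical forwarding table: (destination prefix, where to send matching packets)
ROUTES = [
    (ipaddress.ip_network("0.0.0.0/0"), "default-gateway"),
    (ipaddress.ip_network("10.0.0.0/8"), "corp-backbone"),
    (ipaddress.ip_network("10.1.2.0/24"), "lab-subnet"),
]

def next_hop(destination: str) -> str:
    """Return the next hop of the longest (most specific) matching prefix."""
    addr = ipaddress.ip_address(destination)
    matches = [(net, hop) for net, hop in ROUTES if addr in net]
    # 0.0.0.0/0 matches every IPv4 address, so matches is never empty here
    _, best_hop = max(matches, key=lambda item: item[0].prefixlen)
    return best_hop

print(next_hop("10.1.2.7"))       # lab-subnet
print(next_hop("10.9.9.9"))       # corp-backbone
print(next_hop("93.184.216.34"))  # default-gateway
```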

4. Link Layer

The Link Layer (roughly corresponding to the OSI Data Link and Physical layers) is responsible for moving data between devices that share a direct link, such as hosts on the same Ethernet segment or Wi-Fi network. Its protocols define how data is framed and addressed for transmission over physical media, such as cables or radio waves.

Switches and Routers: Switches operate at the Link Layer and connect devices within a single network, forwarding each frame toward its destination based on hardware (MAC) addresses. Routers, on the other hand, operate at the Network Layer and forward packets between different networks based on IP addresses.
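How does a switch know where a frame's destination is? Ethernet switches typically learn it: they record the port on which each source MAC address was last seen, forward frames for known addresses out of that single port, and flood frames for unknown addresses out of every other port. The class below is a toy sketch of that behavior with hypothetical names; it ignores broadcast frames, table aging, and VLANs.

```python
from typing import Dict, List, Optional

class LearningSwitch:
    """Toy model of an Ethernet learning switch (ports are plain integers)."""

    def __init__(self, num_ports: int) -> None:
        self.ports = list(range(num_ports))
        self.mac_table: Dict[str, int] = {}  # MAC address -> port it was last seen on

    def handle_frame(self, in_port: int, src_mac: str, dst_mac: str) -> List[int]:
        """Return the list of ports the frame is sent out of."""
        # Learn: the sender is reachable through the port the frame arrived on
        self.mac_table[src_mac] = in_port
        out_port: Optional[int] = self.mac_table.get(dst_mac)
        if out_port is None:
            # Unknown destination: flood to every port except the incoming one
            return [p for p in self.ports if p != in_port]
        if out_port == in_port:
            return []  # destination is on the same segment: drop (filter) the frame
        return [out_port]

sw = LearningSwitch(num_ports=4)
print(sw.handle_frame(0, "aa:aa:aa:aa:aa:01", "bb:bb:bb:bb:bb:02"))  # [1, 2, 3] (flood)
print(sw.handle_frame(1, "bb:bb:bb:bb:bb:02", "aa:aa:aa:aa:aa:01"))  # [0] (learned)
print(sw.handle_frame(0, "aa:aa:aa:aa:aa:01", "bb:bb:bb:bb:bb:02"))  # [1] (learned)
```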

Reliable Communication Using TCP

TCP is designed to provide reliable communication on top of IP, which offers only best-effort delivery: packets can be lost, duplicated, corrupted, or delivered out of order. TCP combines several mechanisms to ensure data is delivered correctly, in order, and without overwhelming the network or the receiving end.

The Three-Way Handshake

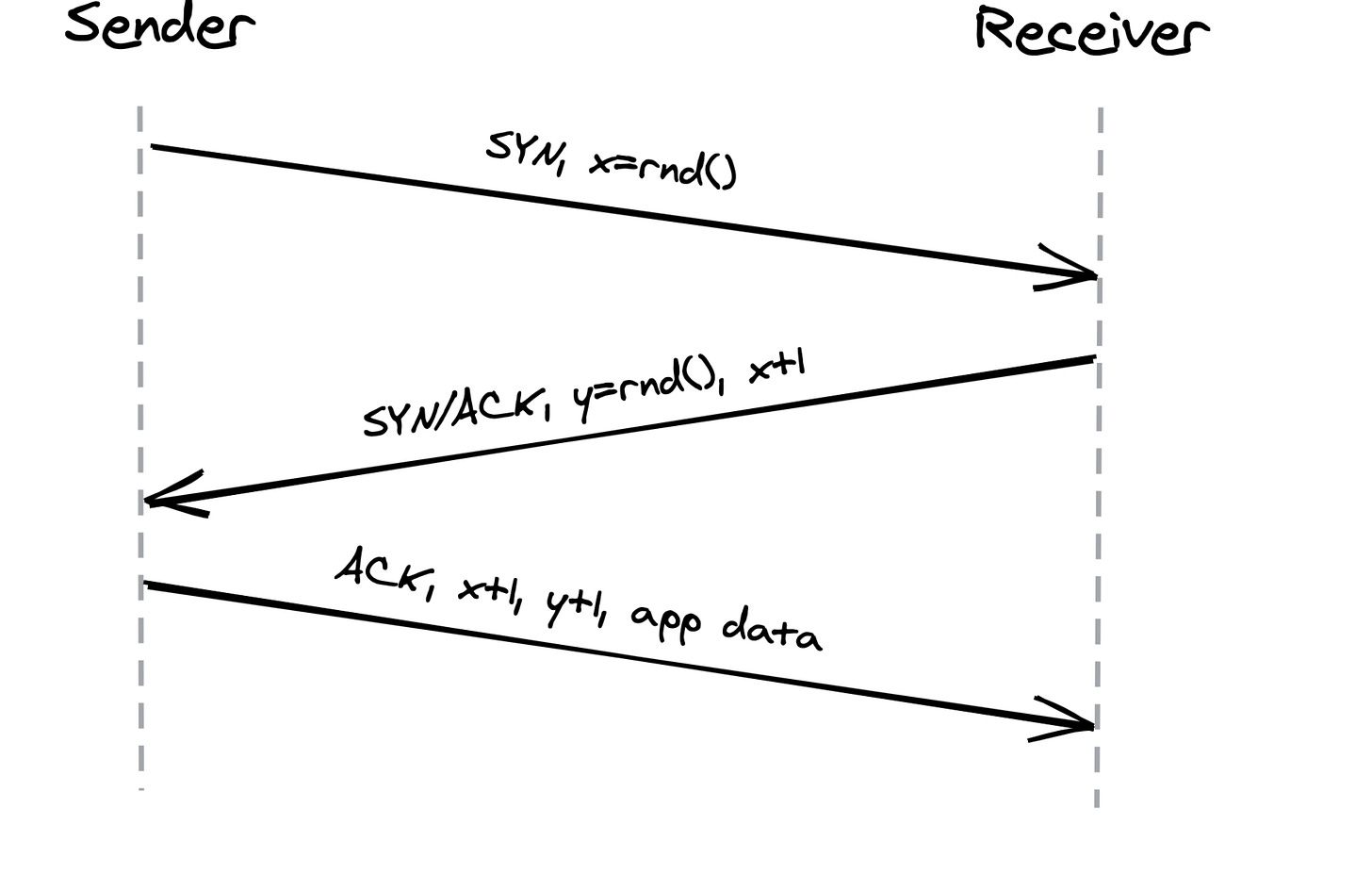

Before any data can be exchanged, TCP establishes a connection between the sender and receiver using a process known as the Three-Way Handshake. This process involves three steps:

SYN (Synchronize): The client sends a SYN packet to the server, requesting to establish a connection. This packet contains an initial sequence number, which is used to keep track of the bytes in the data stream.

SYN-ACK (Synchronize-Acknowledge): The server responds with a SYN-ACK packet that acknowledges the client's SYN (its acknowledgment number is the client's initial sequence number plus one) and carries the server's own initial sequence number.

ACK (Acknowledge): The client sends an ACK packet back to the server, acknowledging the server's sequence number. At this point, a reliable connection is established, and data transfer can begin.
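The numbers exchanged in the handshake follow a simple rule: each SYN consumes one sequence number, so each side acknowledges the other's initial sequence number plus one. The sketch below reproduces that arithmetic with fixed, made-up initial sequence numbers (real stacks choose them unpredictably); the `Segment` type and `three_way_handshake` function are hypothetical and carry only the fields needed here.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Segment:
    flags: str                 # "SYN", "SYN-ACK" or "ACK"
    seq: int                   # sequence number of this segment
    ack: Optional[int] = None  # next sequence number expected from the peer

def three_way_handshake(client_isn: int, server_isn: int) -> List[Segment]:
    # 1. Client -> server: SYN carrying the client's initial sequence number
    syn = Segment("SYN", seq=client_isn)
    # 2. Server -> client: SYN-ACK; the client's SYN consumed one number, hence + 1
    syn_ack = Segment("SYN-ACK", seq=server_isn, ack=syn.seq + 1)
    # 3. Client -> server: ACK of the server's SYN; the client's next number is client_isn + 1
    ack = Segment("ACK", seq=client_isn + 1, ack=syn_ack.seq + 1)
    return [syn, syn_ack, ack]

for segment in three_way_handshake(client_isn=1000, server_isn=5000):
    print(segment)
# Segment(flags='SYN', seq=1000, ack=None)
# Segment(flags='SYN-ACK', seq=5000, ack=1001)
# Segment(flags='ACK', seq=1001, ack=5001)
```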

Data Transfer Over TCP

Once the connection is established, data transfer can begin. TCP splits the byte stream into segments and numbers every byte, so the receiver can reorder segments that arrive out of order and discard duplicates. The receiver confirms receipt with cumulative acknowledgments: each ACK carries the number of the next byte it expects. If a segment is lost, the receiver keeps sending the same acknowledgment number, and the sender retransmits the missing segment, either after a retransmission timeout or, as described later, after receiving several duplicate ACKs.
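The receiver's side of this can be sketched in a few lines. The function below is a simplified model with a hypothetical name: it assumes segments never partially overlap, buffers out-of-order data, delivers bytes to the application only once they are contiguous, and records the cumulative ACK it would send after each arrival. Note how the gap left by a late segment shows up as a repeated ACK number.

```python
from typing import Dict, List, Tuple

def receive(segments: List[Tuple[int, bytes]], initial_seq: int = 0) -> Tuple[bytes, List[int]]:
    """Reassemble (seq, payload) segments; return the data and the ACK sent after each one."""
    next_expected = initial_seq
    out_of_order: Dict[int, bytes] = {}  # buffered segments, keyed by sequence number
    delivered = bytearray()
    acks: List[int] = []
    for seq, payload in segments:
        if seq >= next_expected:  # ignore duplicates of already-delivered data
            out_of_order[seq] = payload
        # Deliver as much contiguous data as is now available
        while next_expected in out_of_order:
            chunk = out_of_order.pop(next_expected)
            delivered += chunk
            next_expected += len(chunk)
        acks.append(next_expected)  # cumulative ACK: "everything before this byte arrived"
    return bytes(delivered), acks

# The segment starting at byte 3 arrives late, after the one starting at byte 6
data, acks = receive([(0, b"Hel"), (6, b"Wor"), (3, b"lo "), (9, b"ld")])
print(data)  # b'Hello World'
print(acks)  # [3, 3, 9, 11]
```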

Flow Control in TCP

Flow control is a crucial aspect of TCP that prevents the sender from overwhelming the receiver with too much data at once. It is built around the receiver's receive buffer, which holds incoming data until the application reads it.

In every acknowledgment, the receiver advertises a window size: the amount of free space currently left in its receive buffer. As the buffer fills, the advertised window shrinks; once it is full, the receiver advertises a window of zero and the sender must pause until space frees up. The sender, in turn, keeps the amount of unacknowledged data it has in flight within the advertised window, ensuring that it never sends more than the receiver can buffer.
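The bookkeeping on both ends can be sketched with a few counters, in the style commonly used in networking textbooks. The function names are hypothetical, the advertised receive window is called `rwnd` here, byte counters start at 0, and real details such as sequence-number wraparound and window scaling are ignored.

```python
def advertised_window(buffer_size: int, last_byte_received: int, last_byte_read: int) -> int:
    """Receiver side: free buffer space, i.e. the window it advertises (rwnd)."""
    unread = last_byte_received - last_byte_read  # data waiting for the application
    return buffer_size - unread

def usable_window(last_byte_sent: int, last_byte_acked: int, rwnd: int) -> int:
    """Sender side: how many more bytes it may send without overrunning the receiver."""
    in_flight = last_byte_sent - last_byte_acked
    return max(0, rwnd - in_flight)

# The application has not read anything yet; 12 KiB sits in a 16 KiB buffer
rwnd = advertised_window(buffer_size=16384, last_byte_received=12288, last_byte_read=0)
print(rwnd)  # 4096
# 1 KiB is already in flight beyond the acknowledged data, so 3 KiB more may be sent
print(usable_window(last_byte_sent=13312, last_byte_acked=12288, rwnd=rwnd))  # 3072
# Buffer completely full: zero window, the sender must pause
print(advertised_window(buffer_size=16384, last_byte_received=16384, last_byte_read=0))  # 0
```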

Flow control is analogous to rate-limiting in APIs, where the flow of requests is controlled to prevent overwhelming a service. However, in TCP, this mechanism operates at the connection level, ensuring smooth and efficient communication between devices.

Congestion Control in TCP

Without effective congestion control, the internet as we know it would be prone to frequent slowdowns, packet loss, and overall inefficiency.

What is Congestion Control?

Congestion control is the mechanism by which TCP regulates the flow of data between a sender and a receiver to prevent overwhelming the network. The internet is a shared medium with finite bandwidth. When multiple devices try to send large amounts of data simultaneously, the network can become congested, leading to delays, packet loss, and degraded performance. TCP's congestion control algorithms aim to maximize network throughput while minimizing congestion.

The Core Concepts of TCP Congestion Control

TCP congestion control is based on the idea of adjusting the rate at which data is sent based on feedback from the network. The primary tool for this is the congestion window (cwnd), which limits how much unacknowledged data can be in transit at any given time. Combined with flow control, this means the sender may have no more than the smaller of cwnd and the receiver's advertised window in flight. Several key phases and strategies define how TCP manages congestion:

Slow Start

Congestion Avoidance

Fast Retransmit and Fast Recovery

Additive Increase/Multiplicative Decrease (AIMD)

Let's break down each of these concepts in detail.

1. Slow Start

Slow Start is the initial phase of TCP congestion control. When a TCP connection is first established, the sender has little information about the network's capacity. To avoid overwhelming the network, TCP starts by sending a small amount of data. In the classic description, the congestion window (cwnd) begins at one Maximum Segment Size (MSS); modern stacks typically start with a somewhat larger initial window (Linux, for example, uses 10 segments), but the principle is the same.

For each acknowledgment (ACK) received, cwnd grows by one MSS. Because a full window's worth of ACKs comes back every round-trip time (RTT), cwnd roughly doubles each RTT. This exponential growth continues until a packet loss is detected or the congestion window reaches a predefined threshold called the slow start threshold (ssthresh).

The purpose of Slow Start is to quickly ramp up the transmission rate while being cautious not to flood the network prematurely. However, the exponential growth in cwnd can lead to congestion if not checked, which brings us to the next phase.
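The per-ACK rule and the per-RTT doubling are two views of the same process. The sketch below counts cwnd in MSS units and assumes that every segment is acknowledged individually (no delayed ACKs) and that no loss occurs; the function name is hypothetical.

```python
from typing import List

def slow_start_trace(ssthresh: int, initial_cwnd: int = 1) -> List[int]:
    """cwnd (in MSS) at the start of each RTT during Slow Start, assuming no loss."""
    cwnd = initial_cwnd
    trace = [cwnd]
    while cwnd < ssthresh:
        acks_this_rtt = cwnd  # one ACK comes back for each segment sent this RTT
        for _ in range(acks_this_rtt):
            if cwnd >= ssthresh:
                break  # threshold reached: Congestion Avoidance takes over
            cwnd += 1  # +1 MSS per ACK...
        trace.append(cwnd)  # ...which doubles cwnd once per RTT
    return trace

print(slow_start_trace(ssthresh=32))  # [1, 2, 4, 8, 16, 32]
```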

2. Congestion Avoidance

Once the congestion window reaches the slow start threshold, TCP transitions from Slow Start to Congestion Avoidance. In this phase, the growth of the congestion window slows to a linear rate: instead of doubling every round-trip time (RTT), cwnd increases by about one MSS per RTT. Implementations commonly achieve this by adding MSS × MSS / cwnd to cwnd on each ACK.

This linear growth is more cautious, aiming to probe the network's capacity without causing congestion. The goal is to gradually increase the transmission rate until the network reaches its limit.
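The per-ACK increment is what turns into "about one MSS per RTT". The sketch below tracks cwnd in bytes, assumes a hypothetical MSS of 1460 bytes and one ACK per full-sized segment, and applies one RTT's worth of updates. Because cwnd grows slightly during the round trip, each later ACK adds a little less, so the total comes out just under one MSS.

```python
def congestion_avoidance_rtt(cwnd: float, mss: int = 1460) -> float:
    """Apply one RTT's worth of per-ACK Congestion Avoidance updates (cwnd in bytes)."""
    acks = int(cwnd // mss)  # about one ACK per full-sized segment in flight
    for _ in range(acks):
        cwnd += mss * mss / cwnd  # each ACK adds a fraction of an MSS
    return cwnd

before = 10 * 1460
after = congestion_avoidance_rtt(before)
print(round((after - before) / 1460, 2))  # 0.96: a little under one MSS per RTT
```

Compare this with Slow Start, where each ACK adds a full MSS.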

3. Fast Retransmit and Fast Recovery

Packet loss is a key signal of network congestion in TCP. When a packet is lost, the receiver cannot acknowledge it, leading the sender to infer that the network is congested. TCP has mechanisms to handle this situation efficiently through Fast Retransmit and Fast Recovery.

Fast Retransmit: If the sender receives three duplicate ACKs (ACKs that repeat the same acknowledgment number, meaning the receiver is still waiting for the same byte), it assumes that the segment starting at that byte has been lost and retransmits it immediately, without waiting for the retransmission timer to expire. This quick response helps minimize the impact of packet loss on transmission.

Fast Recovery: After retransmitting the lost segment, TCP enters Fast Recovery instead of returning to Slow Start. The congestion window is halved (multiplicative decrease), and linear growth resumes once the missing data has been acknowledged. This allows the sender to maintain a relatively high transmission rate while recovering from the packet loss. A retransmission timeout, by contrast, is treated as a sign of more severe congestion: cwnd drops back to 1 MSS and Slow Start begins again.
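Fast Retransmit boils down to counting repeated acknowledgment numbers. The class below is a deliberately simplified Reno-style sketch with hypothetical names: it halves the window (never below 2 MSS) on the third duplicate ACK, falls back to Slow Start on a timeout, and omits the temporary window inflation during recovery and the normal window growth that real implementations perform.

```python
from dataclasses import dataclass
from typing import Optional

DUP_ACK_THRESHOLD = 3  # three duplicate ACKs trigger Fast Retransmit

@dataclass
class RenoSender:
    cwnd: float          # congestion window, in MSS
    ssthresh: float      # slow start threshold, in MSS
    last_ack: int = -1   # highest acknowledgment number seen so far
    dup_acks: int = 0    # how many times last_ack has been repeated

    def on_ack(self, ack_no: int) -> Optional[str]:
        """Process one ACK; return an action string when Fast Retransmit fires."""
        if ack_no == self.last_ack:
            self.dup_acks += 1
            if self.dup_acks == DUP_ACK_THRESHOLD:
                # Fast Retransmit + (simplified) Fast Recovery: halve, skip Slow Start
                self.ssthresh = max(self.cwnd / 2, 2)
                self.cwnd = self.ssthresh
                return f"retransmit segment starting at byte {ack_no}"
            return None
        self.last_ack = ack_no  # new data acknowledged: reset the duplicate counter
        self.dup_acks = 0
        return None

    def on_timeout(self) -> None:
        # Timeout signals severe congestion: remember half the window, restart Slow Start
        self.ssthresh = max(self.cwnd / 2, 2)
        self.cwnd = 1

sender = RenoSender(cwnd=16, ssthresh=64)
for ack_no in [1000, 2000, 2000, 2000, 2000]:
    action = sender.on_ack(ack_no)
    if action:
        print(action, "-> cwnd is now", sender.cwnd)
# retransmit segment starting at byte 2000 -> cwnd is now 8.0
```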

4. Additive Increase/Multiplicative Decrease (AIMD)

The AIMD principle underpins TCP's approach to congestion control. It combines cautious growth with aggressive backoff to maintain a balance between network utilization and congestion prevention.

Additive Increase: During Congestion Avoidance, the cwnd increases linearly by one MSS per RTT, reflecting the additive increase. This steady growth allows TCP to adapt to increasing available bandwidth gradually.

Multiplicative Decrease: When packet loss occurs, the congestion window is cut in half, representing the multiplicative decrease. This sharp reduction helps alleviate network congestion quickly, reducing the likelihood of further packet loss.

AIMD ensures that TCP behaves responsibly in a shared network environment. The additive increase allows the sender to explore available bandwidth, while the multiplicative decrease quickly reduces the transmission rate when congestion is detected.
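The result is TCP's characteristic "sawtooth". The sketch below traces cwnd (in MSS) per RTT under pure AIMD, assuming a hypothetical path on which a loss occurs whenever cwnd exceeds a fixed capacity; Slow Start and timeouts are left out.

```python
from typing import List

def aimd_trace(rtts: int, capacity: int, start: int = 1) -> List[int]:
    """cwnd (in MSS) per RTT under pure AIMD; loss occurs whenever cwnd > capacity."""
    cwnd = start
    trace = []
    for _ in range(rtts):
        trace.append(cwnd)
        if cwnd > capacity:
            cwnd = max(cwnd // 2, 1)  # multiplicative decrease on loss
        else:
            cwnd += 1  # additive increase: +1 MSS per RTT
    return trace

print(aimd_trace(rtts=16, capacity=10, start=6))
# [6, 7, 8, 9, 10, 11, 5, 6, 7, 8, 9, 10, 11, 5, 6, 7]
```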

Additional TCP Congestion Control Algorithms

Over time, several variations of TCP congestion control algorithms have been developed to address specific network conditions and improve performance. Some of the notable algorithms include:

TCP Reno: The classic algorithm that combines Slow Start, Congestion Avoidance, Fast Retransmit, and Fast Recovery.

TCP Tahoe: Reno's predecessor, which already uses Fast Retransmit but lacks Fast Recovery. After detecting packet loss, Tahoe sets ssthresh to half the current window, reduces the congestion window to one MSS, and re-enters Slow Start.

TCP New Reno: An enhancement over Reno that improves the Fast Recovery phase by dealing better with multiple packet losses within a single window of data.

TCP Vegas: An alternative algorithm that emphasizes proactive congestion detection by measuring RTTs and adjusting the sending rate before packet loss occurs.

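CUBIC: A modern congestion control algorithm designed for high-bandwidth, long-distance networks, and the default in Linux. It grows the congestion window as a cubic function of the time elapsed since the last congestion event, rather than per acknowledgment, allowing it to probe available bandwidth more aggressively on paths with long round-trip times.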
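For the curious, RFC 8312 gives CUBIC's target window as W(t) = C * (t - K)^3 + W_max, where W_max is the window just before the last reduction, t is the time in seconds since that reduction, and K = cbrt(W_max * (1 - beta) / C) is the time needed to climb back to W_max. The sketch below evaluates that formula with the RFC's suggested constants C = 0.4 and beta = 0.7; the function name is hypothetical, and the full algorithm's TCP-friendly region and other details are left out.

```python
def cubic_window(t: float, w_max: float, c: float = 0.4, beta: float = 0.7) -> float:
    """CUBIC target window (in MSS) t seconds after the last congestion event."""
    k = (w_max * (1 - beta) / c) ** (1 / 3)  # seconds needed to return to w_max
    return c * (t - k) ** 3 + w_max

for t in range(9):
    print(t, round(cubic_window(t, w_max=100.0), 1))
```

The output starts at 70 (0.7 × W_max, right after the reduction), flattens out near 100 around t ≈ 4.2 s, and then accelerates again as CUBIC probes for more bandwidth.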

Why Congestion Control is Critical

Congestion control is not just about optimizing network performance; it also ensures fairness and stability across the internet. Without effective congestion control, a single sender could monopolize network resources, leading to poor performance for others. Furthermore, uncontrolled congestion could cause congestion collapse, where excessive packet loss and retransmissions lead to a vicious cycle of declining throughput and increasing delays.
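The fairness argument can be checked with a tiny idealized simulation in the spirit of the classic analysis of AIMD. Two hypothetical flows share a link with a made-up capacity, and both see a loss in the same RTT whenever their combined rate exceeds it. Additive increase leaves the gap between them unchanged, while each multiplicative decrease halves it, so the flows converge toward equal shares.

```python
from typing import Tuple

def two_flow_aimd(x: float, y: float, capacity: float, rtts: int) -> Tuple[float, float]:
    """Rates (in MSS per RTT) of two AIMD flows after `rtts` rounds on a shared link."""
    for _ in range(rtts):
        if x + y > capacity:
            x, y = x / 2, y / 2  # both flows see loss: multiplicative decrease
        else:
            x, y = x + 1, y + 1  # no loss: additive increase
    return x, y

x, y = two_flow_aimd(x=1.0, y=20.0, capacity=30.0, rtts=200)
print(round(x, 2), round(y, 2))  # nearly equal, despite the 1 vs 20 start
```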

By dynamically adjusting the rate at which data is sent based on network feedback, TCP congestion control algorithms help maintain a balance between efficient data transfer and network stability. This balance is crucial for the internet's scalability and reliability, enabling billions of devices to communicate effectively.

Conclusion

Understanding the different layers of the network and how TCP ensures reliable communication is fundamental to grasping how data travels across the internet. From the initial three-way handshake to the mechanisms of flow and congestion control, TCP plays a crucial role in maintaining the integrity and efficiency of data transfer.

In a world where reliable communication is critical, whether for streaming video, sending emails, or browsing the web, the principles outlined here provide a solid foundation for anyone interested in networking. By delving into these concepts, we gain insight into the machinery that makes something as seemingly simple as loading a web page actually work.
